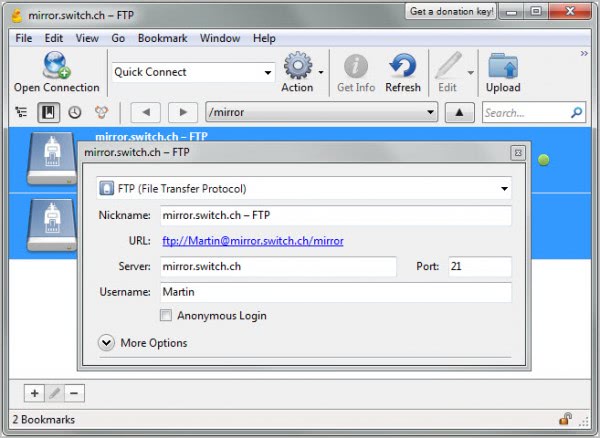

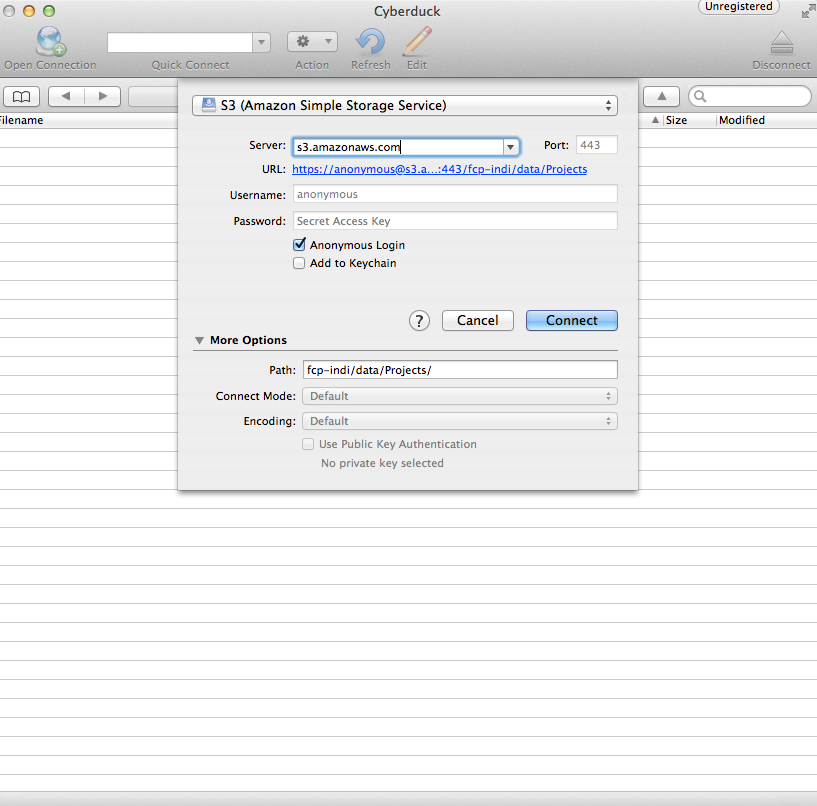

Once the S3 Ingest Job runs from Administration -> Connectors -> S3, a few scenarios can happen with the file after ingestion:

Complete Pass or Fail: The file is moved from the “input” folder to “archived”, with a datetime stamp appended, in the S3 bucket.

Partial Fail: Successful rows are archived, while failed rows go to the “error” folder with a datetime stamp appended, in the S3 bucket.

Put your yet-to-be-uploaded files in “input”. Once your CSV file is ready to upload, drag and drop it into the input directory of the S3 bucket.

In the Cyberduck tool, you'll map the information from Gainsight to Cyberduck. On the S3 connector page, click View S3 Config in the top right corner to find the information you need to load into Cyberduck. After you click “View S3 Config”, your screen will look like the screenshot.

Note: When using Cyberduck, you must copy the Bucket Access Path after s3:// (start with the text that reads gsext).

Cyberduck supports FTP, SFTP, WebDAV, Amazon S3, Google Cloud Storage, Rackspace Cloud Files, and other connection types. It runs on Windows and macOS (OS X).

Related Cyberduck reports: the changelog lists the bugfixes “Fail to list directory with equals symbol in path (S3)” and “Failure to launch program (CLI Linux)”. In another issue (1c67bf1), a user reports that 7.1.2 works without issues. From 7.2.0 onward, however, simply listing a bucket's contents fails with “Request Error: null”.

If you are not using the restricted option (logical directories) for your user and you try to list the root '/', the operation returns Access Denied unless you have permission to list all buckets (s3:ListAllMyBuckets). Caution: Some S3 clients require being able to list all buckets and will fail without this policy. Apply it preferably to a user group, or to individual users. When listing a folder, a trailing '/' is necessary at the end of the folder name; otherwise you will get only the folder name in the result.
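The trailing-slash behaviour comes from how S3 groups keys when a listing uses a prefix and the '/' delimiter. The sketch below is a minimal local imitation of that grouping. These are the same Prefix and Delimiter parameters that, for example, boto3's `list_objects_v2` accepts. The sketch does not contact S3. The helper name `list_prefix` and the key names, modelled loosely on the input/archived/error folders, are hypothetical.

```python
from typing import List, Tuple


def list_prefix(keys: List[str], prefix: str, delimiter: str = "/") -> Tuple[List[str], List[str]]:
    """Hypothetical local imitation of S3 prefix/delimiter listing.

    Returns (contents, common_prefixes). Does not talk to S3.
    """
    contents: List[str] = []
    common_prefixes: List[str] = []
    for key in sorted(keys):
        if not key.startswith(prefix):
            continue  # only keys that begin with the prefix are returned
        rest = key[len(prefix):]
        cut = rest.find(delimiter) if delimiter else -1
        if cut == -1:
            contents.append(key)  # no further delimiter: listed as an object
        else:
            # Everything up to and including the first delimiter is rolled up
            group = prefix + rest[: cut + 1]
            if group not in common_prefixes:
                common_prefixes.append(group)
    return contents, common_prefixes


# Hypothetical bucket layout, for illustration only
keys = [
    "input/accounts.csv",
    "input/contacts.csv",
    "archived/accounts_20230101.csv",
    "error/contacts_20230101.csv",
]

print(list_prefix(keys, "input"))   # ([], ['input/'])  -> folder name only
print(list_prefix(keys, "input/"))  # (['input/accounts.csv', 'input/contacts.csv'], [])
```

Without the trailing slash, the first character after the prefix is the delimiter itself, so every matching key collapses into the single common prefix `input/`.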
Cyberduck is a free and open-source file transfer client for connecting to remote servers so you can download or upload data.

To upload files into the S3 bucket or for troubleshooting, you can use your preferred S3 access tool. For the purpose of this document, we will focus on a very common tool called Cyberduck, a free S3 and OpenStack Swift browser for Mac and Windows. Once in Cyberduck, select S3 (Amazon Simple Storage Service). From here, you will need to fill in some data, including Username, Password and Path. You will find the necessary data in Administration > Connectors > S3 Connector. If you are unable to see your S3 connector information, send an email request to have your S3 connector enabled.

A user reports: “Hello, my Cyberduck keeps giving me an error message that listing directory / failed, and it says access denied.” The suggested replies are:

1. You can also get a list of objects by using the aws-cli.
2. Most likely, the SFTP server is unable to create the root path because the parent directory does not have sufficient permissions to allow the operation. Please contact your web hosting service provider.

A related ticket is Cyberduck #8712, “Listing directory / failed on synchronize only.” In another issue (a11fab0), a user reports a problem when connecting to a bucket with security policies whitelisting specific paths. Older versions (tested on 6.7.0) allow connections to an S3 bucket, landing on a specific w. Supported S3 operations on buckets include GET Bucket (List Objects) version 2.

Another user asks: “When I use the custom policy below, I get the following error when uploading large files (1G and over) using Cyberduck: File access denied. However, uploading small files (around 200M) is not an issue. I also have no issue creating new folders and files with Cyberduck and the same login credentials, so I definitely have read/write access. Also, if I add a predefined policy (AmazonS3FullAccess), uploading large files works OK. How does my policy restrict large file uploads? What am I missing?” The policy's resource entries are `"Resource": "arn:aws:s3:::photoshoot2016"` and `"Resource": "arn:aws:s3:::photoshoot2016/*"`.

The poster later found the answer: “I kept digging around and found that Amazon recommends using multipart uploads for all files over 100M, which I guess is what Cyberduck does. All I had to do in the end was add the missing permissions (ListMultipartUploadParts and ListBucketMultipartUploads) to enable the multipart uploads.”
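Only the resource lines of the custom policy appear in the thread. The following is therefore a sketch, using Python's standard `json` module, of what a single-bucket read/write policy with the two multipart permissions might look like. The bucket name `photoshoot2016` comes from the thread. The following are assumptions, not the poster's actual policy:

- the helper name `build_bucket_policy`
- the remaining actions (`s3:ListBucket`, `s3:GetObject`, `s3:PutObject`, `s3:AbortMultipartUpload`)
- the `s3:ListAllMyBuckets` statement for clients that list all buckets

`s3:ListBucketMultipartUploads` applies to the bucket ARN, while `s3:ListMultipartUploadParts` applies to the object ARN ending in `/*`.

```python
import json


def build_bucket_policy(bucket: str) -> dict:
    """Hypothetical helper: build an IAM policy document for one bucket.

    The action lists are an illustrative assumption, not the thread's policy.
    """
    bucket_arn = f"arn:aws:s3:::{bucket}"
    return {
        "Version": "2012-10-17",
        "Statement": [
            {
                # Assumed: some S3 clients list all buckets on connect
                "Effect": "Allow",
                "Action": ["s3:ListAllMyBuckets"],
                "Resource": "*",
            },
            {
                # Bucket-level actions, including listing in-progress multipart uploads
                "Effect": "Allow",
                "Action": ["s3:ListBucket", "s3:ListBucketMultipartUploads"],
                "Resource": bucket_arn,
            },
            {
                # Object-level actions; s3:PutObject also authorizes the
                # UploadPart and CompleteMultipartUpload calls
                "Effect": "Allow",
                "Action": [
                    "s3:GetObject",
                    "s3:PutObject",
                    "s3:ListMultipartUploadParts",
                    "s3:AbortMultipartUpload",
                ],
                "Resource": f"{bucket_arn}/*",
            },
        ],
    }


print(json.dumps(build_bucket_policy("photoshoot2016"), indent=2))
```

The printed JSON could be attached as a policy, preferably to a user group rather than to individual users.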
